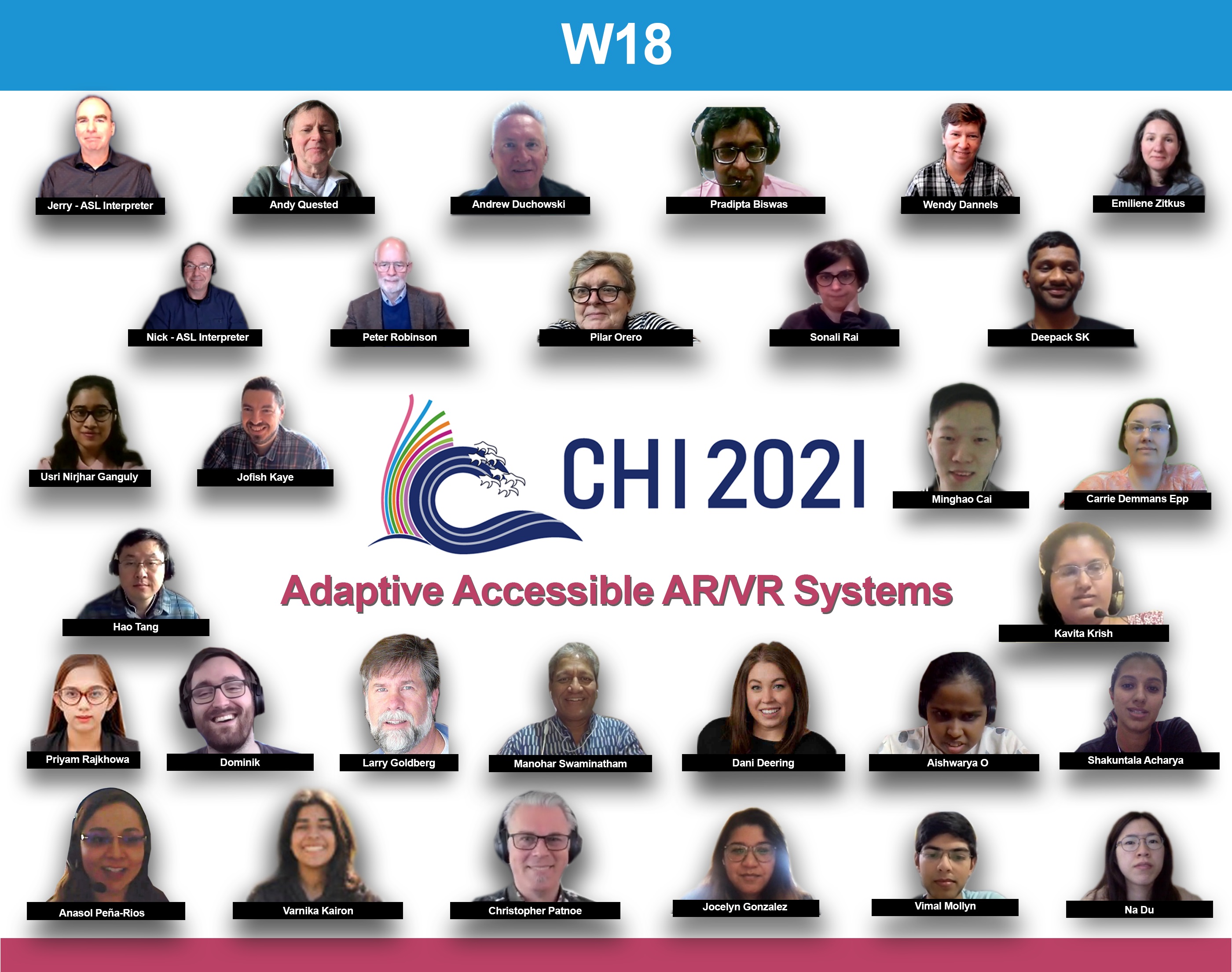

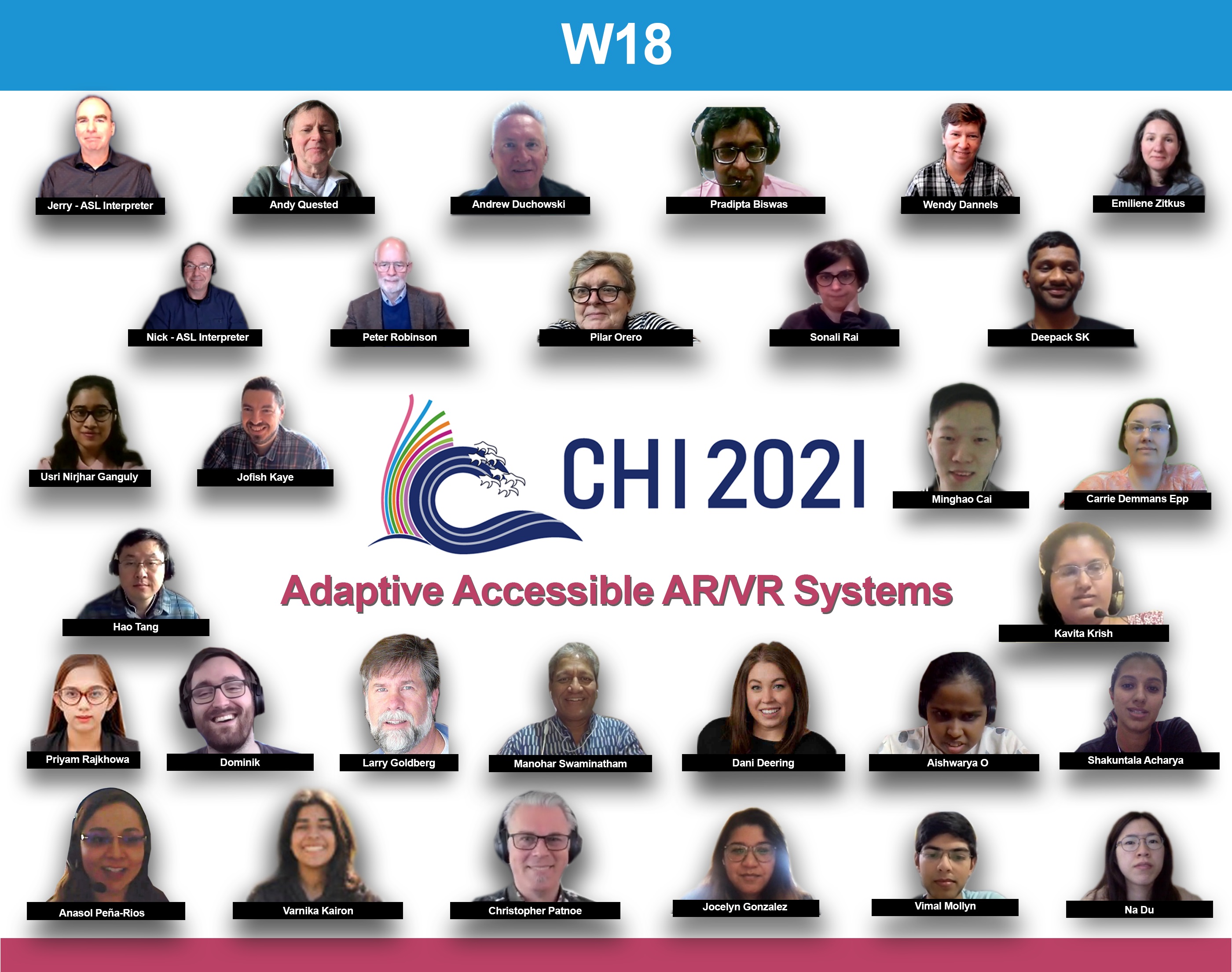

Augmented, virtual, and mixed reality technologies offer new ways of interacting with digital media. However, such technologies remain largely unexplored for people with different ranges of abilities beyond a few specific navigation and gaming applications. While new standardization activities are investigating accessibility issues with existing AR/VR systems, commercial systems are still confined to specialized hardware and software, limiting their widespread adoption among people with disabilities. This workshop takes a novel approach by exploring the application of user-model-based personalization for AR/VR systems. The workshop will be organized by experienced researchers in the fields of human-computer interaction, robotics control, assistive technology, and AR/VR systems, and will consist of peer-reviewed papers and hands-on demonstrations. Keynote speeches and demonstrations will cover the latest accessibility research at Microsoft, Google, Verizon, and leading universities.

Authors of selected papers will be invited to submit extended versions of their papers to a special issue on Adaptive Inclusive AR/VR Systems of the ACM Transactions on Accessible Computing (TACCESS).

All participants must register for the ACM CHI Conference and add this workshop during registration with code: AccessW18

Workshop Schedule

Day 1, 7th May

- Opening Plenary, Introductions

- Personal Capability Profile: Key to Inclusive AR/VR, Swami Manohar, Microsoft Research

- Privacy Concerns of Bystanders with Augmented Reality Assistive Technologies, Priyam Rajkhowa, IISc

- Exploring Augmented Reality Games in Accessible Learning: A Systematic Review, Minghao Cai, University of Alberta

- Eye Gaze Controlled CoBot for users with SSMI, Pradipta Biswas, IISc

- The XR Access Initiative - Real Accessibility in Virtual Environments, Larry Goldberg, Verizon Media

Day 2, 8th May

- VR360 Subtitles, Pilar Orero, UAB

- Eye Tracking for XR, Andrew Duchowski, Clemson University

- The birth of augmented reality, Peter Robinson, University of Cambridge

- Impact of Visualisation in Virtual Environment, Deepak & Usri, IISc

- Designing experiences for blind and partially sighted people, Sonali Rai, RNIB

- Personal, Accessible, Immersive – my choice matters, Andy Quested, European Broadcasting Union

Day 3, 9th May

- Augmented Reality for Assistive Robots, Kavita Krishnaswamy, University of Maryland (BC)

- Hands-on Hand-in-Hand, O. Aishwarya, IIIT & Microsoft Research

- The Augmented Employee, Anasol Pena-Rios, British Telecom

- Gradually Including Potential Users, Emilene Zitkus, Loughborough University

- Immersive Captions, developing with the community, Christopher Patnoe, Google

- Group Discussion and Closing Remarks